[AI Solution (68)] “When AGI arrives, no one will work”… What is Altman’s real aim behind the bombshell remark?

OpenAI CEO Sam Altman has dropped another bombshell. He warned that once artificial general intelligence, or AGI, emerges, no one will work and the economy will collapse. In effect, the creator of the technology is painting the darkest possible picture of where his own work leads.

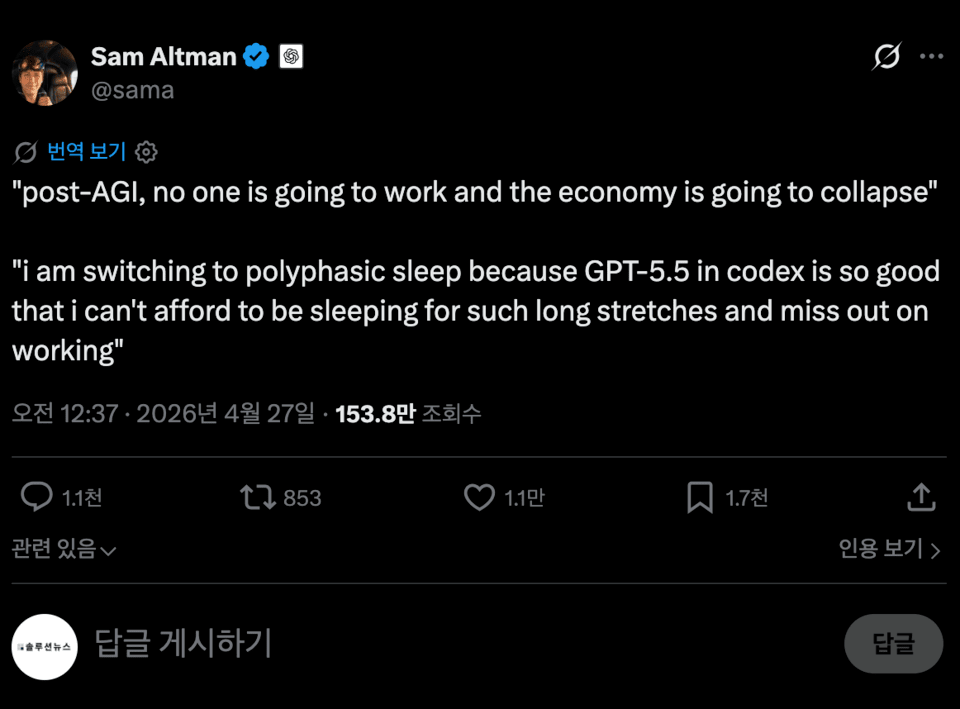

Altman posted a short sentence on X, the social media platform run by Elon Musk: “After AGI, nobody will work and the economy will collapse.” That one-line post shook the global tech industry. AGI refers to next-generation AI capable of performing nearly any task a human can do.

“We need to sleep less”… the CEO’s half-joking but serious remark

The weight of the comment became even clearer in a second post that followed. Altman wrote, “GPT-5.5 in Codex is so good that I can’t afford to waste long stretches on sleep. So I’m going to switch to polyphasic sleep.” Polyphasic sleep is a pattern of sleeping in several short intervals throughout the day.

It may sound like a joke, but the message is heavy. It is a confession that AI development has reached a speed at which even human biological rhythms feel the pressure. The faster AI works, the less the humans trying to keep up will sleep and the longer they will work. At the end of that path lies a contradiction: the same humans ultimately lose their jobs.

Altman’s post came just after OpenAI unveiled its new model, GPT-5.5. The company described GPT-5.5 as “the most capable and easiest-to-use model we have built so far.” According to the company, it can handle writing, coding, research, and data analysis, and it can independently make plans, use tools, and verify results without step-by-step instructions.

OpenAI President Greg Brockman described GPT-5.5 as “a new kind of intelligence.” It can complete complex tasks with little human intervention and marks a significant advance in processing speed and efficiency, according to the company.

It is currently being offered to some paid users on ChatGPT and Codex, including Plus, Pro, Business, and Enterprise subscribers. A more powerful GPT-5.5 Pro version is also set to roll out soon to some users.

Cracks in the familiar 9-to-6 routine

AI experts say the model of work built around fixed office hours is gradually changing. Signs of that shift are already visible everywhere. Tasks that once required an entire team are increasingly being handled by a single person using AI tools.

Productivity has risen, but side effects have followed. More people are staying online longer and working more in order to keep pace with AI’s speed. It is the paradox of an efficiency tool becoming a driver of greater labor intensity. The scene Altman described when he said he would sleep less is already playing out across Silicon Valley.

If AI replaces existing jobs faster than it creates new ones, the outcome is obvious: a chain reaction of lost jobs, widening income inequality, and weakening consumption. The “economic collapse” Altman referred to points to exactly that scenario.

Not everyone agrees

That said, Altman’s diagnosis is not universally accepted. There is also a strong counterargument that even if some jobs disappear, new ones will be created on a similar scale.

Some believe humans will still be needed in areas involving creativity, judgment, and real-world decision-making. History also offers support for that view: with every technological revolution, similar fears have arisen, yet labor markets have eventually found a new equilibrium.

But there is another voice saying this time is different. AGI is not merely an automation tool, but a technology that can replace broad aspects of human cognition. Given the aggressive model competition among companies such as OpenAI, Anthropic, Meta, and Nvidia, expectations that AGI is not far off are gaining traction.

Of course, AGI has not yet arrived, and its timing remains uncertain. For that reason, Altman’s statement is closer to a warning than a prophecy. It is a kind of social shock therapy, in which the darkest scenario for the technology he is building is held up in advance.

What must be prepared to avoid collapse? (The solution)

Repeating warnings alone will not produce answers. The real issue has already shifted to who will benefit from the fruits of AI-driven productivity, and how.

The hottest topic is redesigning the tax structure. Human labor is heavily taxed, but the wealth generated when AI does the same work is taxed only lightly.

That is why Bill Gates raised the idea of a “robot tax” early on, and why Altman himself once mentioned strengthening taxes on AI companies and capital as a funding source for universal basic income, or UBI. As AI rises as a producer, the center of gravity in taxation will have to move from labor toward capital and algorithms.

The next issue is redefining working hours. The idea is to convert higher productivity into fewer working hours rather than more output.

Finland and Iceland tested a four-day workweek and found higher happiness without a decline in productivity, and some companies in the United Kingdom are following the same path. In Korea, Gyeonggi Province and some IT companies have also begun pilot programs. Behind this trend is a practical realization: if working hours are not reduced, AI efficiency will simply become another tool for pushing people harder.

The most fundamental issue is redefining human roles themselves. Standardized office work is being rapidly absorbed by AI, leaving humans the areas that involve unstructured judgment, interpersonal relationships, emotion, and ethics.

That is why healthcare and caregiving, education, creative work, and face-to-face services are emerging as new human domains. The era in which a single college major could sustain a lifetime career is ending. As a result, there is growing demand to upgrade vocational training into a lifelong learning system and to move toward a model in which the state supports job transitions.

The Korean government is not standing outside this trend. Policies such as the enactment of the Basic AI Act, the announcement of a Digital Bill of Rights, and the introduction of AI textbooks are being pushed forward one after another. So far, however, the discussion has centered on balancing industrial promotion and regulation. Altman’s comments pose the next set of questions: when AI shakes the social structure itself, what kind of safety net will Korea build, and how quickly?

Perhaps that is where Altman’s real aim lies: in holding up the darkest scenario in advance, as a warning rather than a prophecy.

It is a paradoxical plea to find a way not to collapse, starting from the assumption that collapse may come. No one yet has the answer. But the question has become clear.

A question posed to Korea

The same question is being asked in Korean society. White-collar fields such as coding, writing, call-center support, and accounting are already within AI’s sphere of influence.

How the familiar 9-to-6 workday will change over the next decade, and how Korea’s labor market and education system should prepare for that shift, is emerging as a major topic.

How should we tax the wealth created by AI? How should the income of people who lose their jobs be protected? Is a world in which humans no longer need to work truly a happy one?

The one-line message Altman posted ultimately leads to these heavy questions. No one yet has the answers.