The source code was open for 13 months, across 363 updates.

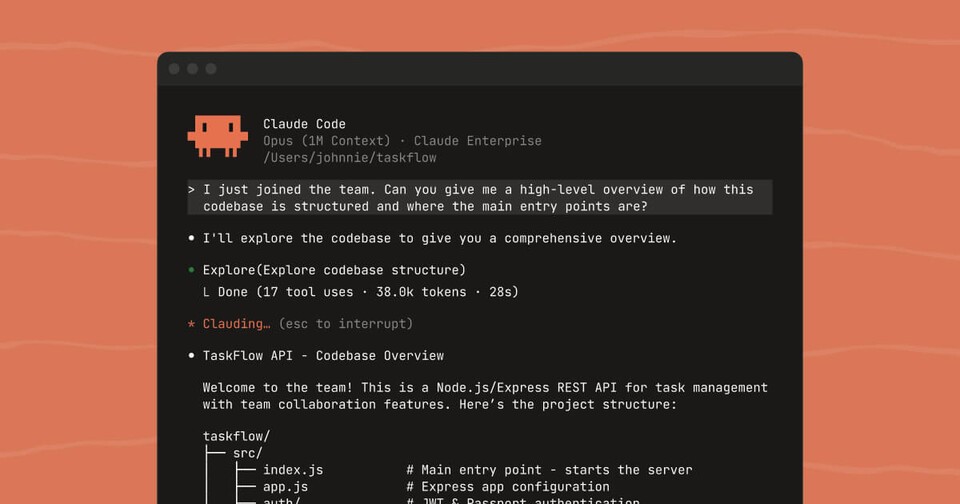

Claude Code, the AI coding tool Anthropic launched in February last year, had its entire source code exposed from day one. The cause was a sourcemap tucked inside an installation file uploaded to npm, the package registry used to distribute JavaScript software.

A sourcemap works like a recovery key: it maps minified (compressed) code back to its original form. It is meant as a debugging convenience for developers, but it was mistakenly shipped with the distribution file, so anyone could download the package, unpack it, and read the entire source.
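To make the "recovery key" idea concrete, here is a minimal sketch of how original files can be read out of a sourcemap. It relies on the `sources` and `sourcesContent` fields of the Source Map v3 format and assumes the map embeds the original files in the optional `sourcesContent` field. The function name `recover_sources` and the example map are hypothetical, written only for illustration; this is not the procedure any of the people named in this article are known to have used.

```python
import json


def recover_sources(map_text: str) -> dict[str, str]:
    """Return {original path: original source} from a Source Map v3 document."""
    source_map = json.loads(map_text)
    sources = source_map.get("sources", [])
    # "sourcesContent" is optional; when present it runs parallel to "sources".
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for index, path in enumerate(sources):
        content = contents[index] if index < len(contents) else None
        # Entries may be null when a file's text was not embedded in the map.
        if content is not None:
            recovered[path] = content
    return recovered


# Hypothetical, hand-written map for illustration only.
EXAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.min.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const answer: number = 42;\n", None],
    "mappings": "",
})

if __name__ == "__main__":
    for path, text in recover_sources(EXAMPLE_MAP).items():
        print(path, len(text), "characters")
```

If a map lists file paths but omits `sourcesContent`, this approach recovers only the names, not the code.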

Anthropic realized the mistake and deleted the version within about two hours, but it was already too late.

On the day of discovery, the code had already spread across the internet.

On the morning of February 25th last year, Daniel Nakov, CTO of AI startup INVISR, extracted the source code and uploaded it to GitHub. That same day, related threads appeared on the developer community Hacker News, drawing hundreds of comments.

Dave Shoemaker, an engineer at the real estate platform Zillow, had opened the file, stepped out, and returned to find the code gone. It had been erased from the npm registry and cache, but pressing undo in the text editor Sublime Text restored it. He described what he did in a blog post on February 27th.

Analyses piled up quickly. By March 1st, a method for using AI to convert the minified JavaScript back into TypeScript had been made public. On March 7th, detailed technical analyses appeared covering the system prompt, the language parsing structure, and the AWS Bedrock integration. By March 30th, comprehensive analyses of the task execution loop, the list of built-in tools, and the permission system had been published.

The same thing happened again 13 months later, on March 30-31 this year, when UC Berkeley security researcher Chaofan Shou discovered a 59.8 MB sourcemap file in the latest version of Claude Code (2.1.88). Last year the sourcemap was hidden inside the installation file; this time it shipped in plain sight as a separate file. It remains unclear at which point in the 363 updates it was reintroduced.
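A sourcemap shipped as a separate file is easy to spot, because an npm package is a gzipped tarball whose files sit under a top-level `package/` directory. The sketch below lists every `.map` file in such a tarball along with its size. The function names and the demo file names are hypothetical, and the check would miss a sourcemap embedded inside another file, which is how the article describes last year's exposure.

```python
import io
import tarfile


def find_sourcemaps(tarball_bytes: bytes) -> list[tuple[str, int]]:
    """List (member name, size in bytes) for every .map file in a gzipped tarball."""
    found = []
    with tarfile.open(fileobj=io.BytesIO(tarball_bytes), mode="r:gz") as archive:
        for member in archive.getmembers():
            if member.isfile() and member.name.endswith(".map"):
                found.append((member.name, member.size))
    return found


def build_demo_tarball() -> bytes:
    """Build a tiny in-memory package with made-up file names, for illustration."""
    files = {
        "package/package.json": b'{"name": "demo"}',
        "package/cli.js": b"console.log(1);",
        "package/cli.js.map": b'{"version": 3}',
    }
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        for name, data in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(data)
            archive.addfile(info, io.BytesIO(data))
    return buffer.getvalue()


if __name__ == "__main__":
    print(find_sourcemaps(build_demo_tarball()))
```

A check along these lines could run against the packed tarball before publishing, failing the release if any entry is returned.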

Researchers unearthed many elements… before official announcements.

Inside the source code were numerous elements Anthropic hadn’t officially announced. On January 24th this year, developer Mike Kelly discovered a multi-agent system called ‘TeammateTool’ in version 2.1.19.

A detailed analysis of the feature, codenamed ‘Swarms,’ surfaced in the community two days later. Anthropic’s official announcement did not come until February 6th, about two weeks after the discovery. In effect, the product roadmap was disclosed by outsiders first.

Researchers identified more undisclosed features: ‘Kairos,’ an assistant mode; ‘Buddy System,’ an April Fool’s Tamagotchi feature; and ‘Undercover mode,’ a mode for hiding internal information. There were also 83 undocumented environment variables, and the full system prompt and the descriptions of 18 built-in tools were extracted, version by version, into an automatically updated GitHub repository.

Security vulnerabilities were also found by outsiders. In February this year, Check Point Research published two of them. One allowed remote control of a developer’s computer when the developer merely cloned a malicious repository; the other enabled API key theft. Anthropic patched both issues, but the exposed source code may have made such vulnerabilities easier to find.

Why wasn’t it prevented… the answer lies in the structure.

The product kept growing even with its code exposed, and the reason lies in how Claude Code is structured. Claude Code is a client program that sends user commands to Anthropic’s servers and receives responses.

The core AI model lives on the server. Even someone who takes the entire source code can do nothing with it without API access. It’s like copying a TV remote: without the TV, you cannot change channels.
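The client/server split described above can be shown with a toy model. Everything in this sketch is hypothetical: `FakeModelServer` and `ThinClient` are made-up names, no network is involved, and nothing here reflects Claude Code's actual internals. It only illustrates that a copied client is useless without a credential the server accepts.

```python
from dataclasses import dataclass


@dataclass
class FakeModelServer:
    """Stand-in for the hosted model; the valuable work happens here."""
    valid_keys: frozenset

    def handle(self, api_key: str, prompt: str) -> str:
        # The server, not the client, decides whether a request is served.
        if api_key not in self.valid_keys:
            raise PermissionError("invalid API key")
        return f"(model output for: {prompt})"


@dataclass
class ThinClient:
    """All a leaked client contains: request plumbing, no model."""
    server: FakeModelServer
    api_key: str

    def ask(self, prompt: str) -> str:
        return self.server.handle(self.api_key, prompt)


if __name__ == "__main__":
    server = FakeModelServer(valid_keys=frozenset({"real-key"}))
    print(ThinClient(server, "real-key").ask("refactor this function"))
    try:
        # A copied client with no valid key gets nothing back.
        ThinClient(server, "guessed-key").ask("refactor this function")
    except PermissionError as error:
        print("rejected:", error)
```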

Competitors do not copy this code. AI coding agents such as Cline, Goose, and Aider look only to the structure for reference. They learn “this is how it’s built,” and then write everything from scratch.

It’s similar to an architect observing a competitor’s building for design inspiration rather than copying the blueprints directly.

Claude Code isn’t an open-source project that anyone can modify and distribute; it’s proprietary licensed software. Unauthorized copying and use of the code can result in legal liability.

Anthropic is also taking legal action. On the 19th of last month, it filed a lawsuit against the third-party tool ‘OpenCode,’ which bypassed the internal API of Claude Code and let $200/month Max subscribers use tokens more cheaply. In February this year, it amended its terms of service to explicitly prohibit third-party tools from using Claude. OpenCode removed the related plugin in version 1.3.0.

Implications… a new transparency model in the AI era.

This incident goes beyond a mere security error. It questions how AI products should exist.

In the traditional software world, source code leaks were fatal because competitors could use the code directly. The Claude Code case is different. If the server-side model is the essence of competitiveness, exposed source code is not a threat but a foundation for an ecosystem. Indeed, a third-party ecosystem of internal tool catalogs, version tracking systems, and visualization tools has flourished around Claude Code, garnering thousands of stars.

This suggests a possible approach for AI companies: a middle ground in which the model and infrastructure stay closed while the client code is effectively open, inviting both contributions and scrutiny from the community.

However, challenges remain. That outsiders found the vulnerabilities highlights the limits of internal validation. The more source code is exposed, the easier it becomes for malicious actors to understand the structure. And Anthropic has not officially explained how the sourcemap came to be shipped again, which undermines trust.

The code is open, and ownership is closed. One thing the 13-month experiment confirmed is that in the AI era, the moat around products lies not in the code but in the model and infrastructure.